Authored by Dave Stauffacher, Chief Platform Engineer at Direct Supply. With a background in data storage and protection, Dave has helped Direct Supply navigate 30,000% data growth over the last 15 years. Dave has showcased his cloud experience in presentations at the AWS Midwest Community Day, AWS re:Invent, HashiConf, the Milwaukee Big Data User Group, and other industry events. Dave is a certified AWS Solutions Architect Associate.

In April, AWS released a new type of AWS Storage Gateway called the Amazon FSx File Gateway. Similar to the AWS Storage Gateway products that provide a convenient on-premises front-end for Amazon Simple Storage Service (Amazon S3) storage, the FSx File Gateway provides a local front-end for an Amazon FSx for Windows File Server file system.

When I was interviewed on the AWS Podcast, episode number 439, “Introducing Amazon FSx File Gateway,” I talked through my primary use case for the FSx File Gateway. In short, I have two user populations that require low-latency file storage for use with video, photo, CAD and 3D design workflows. They currently rely on an aging scale-out network attached storage (NAS) environment in our data center. With the majority of my resources already moved out to AWS, I wanted a way to lifecycle the data center NAS that supported my cloud-first strategy, met my performance needs, and didn’t break the bank.

Before I recommended the Amazon FSx File Gateway as a replacement to my data center NAS infrastructure, I needed to verify that it would deliver a positive experience for my on-premises end-user workloads and have a lower total cost of ownership when compared to buying a replacement NAS. In this blog, I walk you through my performance testing method and what I learned about using the FSx File Gateway from the results. I also want to share the financial benefits I was able to achieve by moving to an FSx File Gateway architecture.

Background and requirements

My current data center NAS environment includes a performance data collection and reporting app that I used to profile my existing workloads and storage consumption. For this exercise, I profiled current usage to develop safe operating parameters against which the FSx File Gateway could be compared. Based on the available performance data, my needs are as follows:

- Throughput needed is <400Mb/s; average is 50Mb/s (6.25MB/s).

- Read traffic = 83%, Write traffic = 17%.

- Average 1100 IOPS during business hours.

- Average disk latency <2ms.

- The current data set is 80TB, with an estimated 5% growth per year.

- Clients are all Windows and Mac workstations connecting with SMBv2 or SMBv3.

- My data center is connected to AWS over a 1 Gb/s AWS Direct Connect connection.
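The requirements above mix megabits per second (Mb/s) and megabytes per second (MB/s), so it helps to put everything in one unit before comparing test results. The following is a minimal Python sketch of that conversion. The function name is illustrative, and treating the Direct Connect link as 1,000 Mb/s with no protocol overhead is an assumption.

```python
def mbit_to_mbyte(megabits_per_second: float) -> float:
    """Convert megabits per second (Mb/s) to megabytes per second (MB/s)."""
    return megabits_per_second / 8


# Figures taken from the requirements list.
average_mbyte = mbit_to_mbyte(50)    # average throughput
ceiling_mbyte = mbit_to_mbyte(400)   # upper bound on throughput needed

# Assumption: a 1 Gb/s link is treated as 1,000 Mb/s, ignoring protocol overhead.
link_mbyte = mbit_to_mbyte(1000)

print(f"Average need:   {average_mbyte} MB/s")
print(f"Ceiling:        {ceiling_mbyte} MB/s")
print(f"Direct Connect: {link_mbyte} MB/s (theoretical)")
```

Expressed this way, the throughput ceiling of 50 MB/s sits well below the theoretical 125 MB/s of the link.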

My NAS is made up entirely of spinning disk. Data is stored redundantly across NAS cluster nodes. I operate my NAS in a multi-tenant configuration, with two of my three existing tenants accounting for 80% of business-hours traffic. My proposed architecture includes moving each tenant to a separate FSx for Windows File Server file system, fronted by an FSx File Gateway virtual appliance.

Testing

To ensure the FSx File Gateway would meet and exceed my current workload requirements, I ran the gateway through several performance tests. I compared different network and cache disk configurations to predict what my end users would experience.

I then ran the same performance tests from a local client against my existing NAS environment and against the FSx file system without the gateway. This gave me a baseline for my current environment, and showed how a local client in my data center performs when it uses an FSx for Windows File Server file system in the cloud with no local gateway in the path. In the event I lost my data center, I would redirect traffic from local clients directly to the FSx for Windows File Server file system, so it was important to understand what that would mean for user workflows.

There are several tools that can be used to test performance of a file system. My preferred method is to use a Windows command line tool, diskspd.exe. Based on my NAS performance metrics, it was easy to script out extended-run file system tests that resemble my production workloads.

The basic command I used to run diskspd.exe against each of my file systems looks like this:

diskspd.exe -c20M -b4k -si -d600 -w50 -F8 -L -Srw \\target-file-path\diskspeed.dat

Where:

- -c20M is the test file size. I ran tests with file sizes ranging from 256 KB to 100 MB.

- -b4k is the block size. I ran tests with block sizes of 4 KB, 64 KB and 128 KB.

- -d600 is the duration of each test, in seconds.

- -w50 is the percentage of write activity. My tests covered:

  - 25% write / 75% read

  - 50% write / 50% read

  - 75% write / 25% read

  - 85% write / 15% read

- -F8 is the number of files to use for each test.

- -si sets the sequencing of the writes. Other tests used -r for random writes.

- -L includes latency statistics.

- -Srw disables local caching, forcing all read/write activity to hit the target file system.

I ran these tests from several different sources, including Windows client workstations and on-premises Windows Server 2008, 2012, and 2016 machines.
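The test matrix described above can be scripted instead of typed by hand. The Python sketch below only builds the command lines and does not run them. It uses only the flags shown in the example command. The file sizes are an assumption: the two ends of the tested range plus the 20 MB value from the example, since the intermediate sizes are not listed. The target path is the same placeholder used in the example command.

```python
from itertools import product

# Placeholder path copied from the example command; replace with a real share.
TARGET = r"\\target-file-path\diskspeed.dat"

# Assumption: only the range endpoints and the example value are used here.
FILE_SIZES = ["256K", "20M", "100M"]
BLOCK_SIZES = ["4k", "64k", "128k"]
WRITE_PERCENTAGES = [25, 50, 75, 85]
ACCESS_PATTERNS = ["-si", "-r"]  # sequential, then random


def build_commands(duration_seconds: int = 600, file_count: int = 8) -> list[str]:
    """Return one diskspd.exe command line per combination in the test matrix."""
    commands = []
    for size, block, write_pct, pattern in product(
        FILE_SIZES, BLOCK_SIZES, WRITE_PERCENTAGES, ACCESS_PATTERNS
    ):
        commands.append(
            f"diskspd.exe -c{size} -b{block} {pattern} -d{duration_seconds} "
            f"-w{write_pct} -F{file_count} -L -Srw {TARGET}"
        )
    return commands


if __name__ == "__main__":
    for command in build_commands():
        print(command)
```

With these lists the matrix has 72 combinations, which at 600 seconds each is 12 hours of test time per source machine, so trimming the lists to the cases that matter for a given workload is worthwhile.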

My FSx File Gateway environments

Being a storage guy, I wanted to understand the impact that my on-premises storage configuration would have on my FSx File Gateway. I have both SSD and spinning disk available on my SAN, so I created two gateways – one on each type of storage. I used the AWS Open Virtualization Archive (OVA) gateway image and deployed both gateways using the same configuration. Following the documentation, each gateway VM was deployed with 4 vCPUs, 16 GB RAM, and 150 GB of cache disk.

For FSx for Windows File Server file systems, I created two identical high-availability environments. I built them with the minimum amount of disk required (2000 GB HDD storage) and joined them to the same Active Directory domain. The only difference was the availability zone in which the primary node was located.

Baseline from local clients to on-premises NAS

My data center NAS was originally configured to handle more workloads than it does today. In many ways, it is over-sized for the current data set. It came as no surprise that it performed well in my tests. The file system performed best when writing the largest block size tested (128 KB). On average, a single client is capable of sustained throughput of 70 MB/s, which is more than 10 times the average throughput needed across the entire NAS environment. Latency was also expectedly low, averaging just above 2 ms.

Accessing Amazon FSx for Windows File Server file system directly from on-premises clients

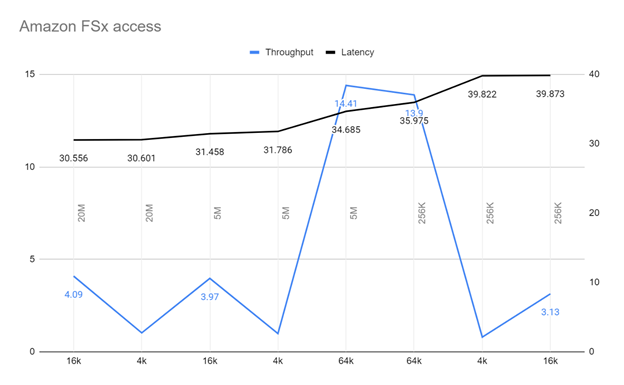

To connect from my campus to an FSx for Windows File Server file system running in the US-East-1 region, the traffic has to traverse a 1 Gb/s Direct Connect link. I expected I would see some performance impact, and I did. In testing, I was able to average 5 MB/s throughput writing directly to FSx for Windows File Server file system. Based on the average needs of my NAS, a single client is capable of generating 80% of the throughput my environment needs for most operations. Given the number of network hops and the distance the data travels, latency was a concern when accessing data directly from FSx for Windows File Server file system, averaging 34 ms. It is important to note that we did not see the same results when testing from EC2 instances running in our AWS account.

Accessing Amazon FSx File Gateway from on-premises clients

I expected that an FSx File Gateway, deployed to my on-premises VMware environment, would improve throughput when compared to accessing a native FSx for Windows File Server file system. What I didn’t expect was that the FSx File Gateway would boost throughput by up to 10x! Average throughput from my on-premises clients was 50 MB/s, peaking at 123 MB/s. Latency improved by a similar factor, averaging 3.7 ms. This is far more than my on-premises workloads require.
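The comparison between direct access and gateway access can be checked with a few lines of arithmetic. The Python sketch below uses only the averages reported in this post. The variable names are illustrative.

```python
# Averages reported in the test results.
direct_throughput_mbyte = 5.0    # direct to FSx for Windows File Server
gateway_throughput_mbyte = 50.0  # through the FSx File Gateway
direct_latency_ms = 34.0
gateway_latency_ms = 3.7
average_need_mbyte = 6.25        # 50 Mb/s from the requirements

throughput_gain = gateway_throughput_mbyte / direct_throughput_mbyte
latency_gain = direct_latency_ms / gateway_latency_ms
direct_share_of_need = direct_throughput_mbyte / average_need_mbyte
gateway_headroom = gateway_throughput_mbyte / average_need_mbyte

print(f"Throughput gain with gateway: {throughput_gain:.1f}x")
print(f"Latency gain with gateway:    {latency_gain:.1f}x")
print(f"Direct access covers {direct_share_of_need:.0%} of the average need")
print(f"Gateway average is {gateway_headroom:.0f}x the average need")
```

The throughput ratio works out to 10x, and the latency ratio to roughly 9.2x.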

Financial analysis

The data center NAS I’m currently using is nearing the vendor’s end of support, meaning that finding a replacement solution is unavoidable. To meet my minimum requirements for performance and capacity, I expect to spend $150,000 on new hardware, and another $45,000 total on support for a data center solution that would meet my capacity needs for the next 5 years. This price estimate doesn’t include any backup or disaster recovery solution costs such as hardware, software, or secondary datacenter resources to protect my NAS infrastructure. Factoring these costs in to my budget would more than double the expected spend.

My proposed FSx for Windows File Server configuration estimate is based on using HDD storage. I chose HDD storage for two reasons: First, my end users only need low-latency access to data supporting current projects, meaning most of the data we’re storing is infrequently accessed. Second, any FSx File Gateway I deploy will be responsible for delivering frequently used data quickly, and will be sized such that all current projects should be accessed from the local cache. For the times older data needs to be accessed, the response time of HDD storage will meet the needs of my end-users.

Based on the 5% growth rate estimate, the 80 TB of data currently stored will grow to about 102 TB over 5 years. Each of my 3 file systems will be set to 32 MB/s throughput, meeting the minimum throughput requirements for using FSx for Windows File Server file system with an FSx File Gateway. I’m basing the pricing on a single-AZ configuration to better match the capabilities of an on-premises NAS solution. I’m also planning on using a single gateway for the three file systems (a single gateway can support up to five file systems!). The cost breakdown for the 3 file systems and gateway I created looks like this:

| File system | Size (GB) including 5 years growth | Throughput | Storage cost ($0.013 per GB-month) | Throughput cost ($2.20 per MBps-month) | Total cost/month |
| --- | --- | --- | --- | --- | --- |
| Tenant 1 | 53248 | 32 MB/s | $692.22 | $70.40 | $762.62 |
| Tenant 2 | 25600 | 32 MB/s | $332.80 | $70.40 | $403.20 |
| Tenant 3 | 25600 | 32 MB/s | $332.80 | $70.40 | $403.20 |
| Gateway cost ($0.69/hr) | | | | | $503.70 |
| Total monthly | | | | | $2072.72 |

A table comparing the size, throughput, storage cost, throughput cost, and total cost for three file systems as well as the total monthly cost for all three

Over 60 months, or the expected lifetime of any on-prem NAS environment I would purchase, I would be paying $124,363 in gateway, storage, and throughput fees.
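The monthly and 60-month figures can be reproduced from the rates in the table. The Python sketch below is illustrative rather than a pricing tool. The 730 hours per month used for the gateway is an assumption, chosen because it matches the $503.70 gateway line. The rates are the ones quoted in this post and may not reflect current AWS pricing.

```python
STORAGE_RATE = 0.013         # USD per GB-month (HDD storage, from the table)
THROUGHPUT_RATE = 2.20       # USD per MBps-month (from the table)
GATEWAY_HOURLY_RATE = 0.69   # USD per hour (from the table)
HOURS_PER_MONTH = 730        # assumption: average hours in a month
THROUGHPUT_MBPS = 32         # provisioned throughput per file system

FILE_SYSTEM_SIZES_GB = {"Tenant 1": 53248, "Tenant 2": 25600, "Tenant 3": 25600}


def file_system_monthly_cost(size_gb: int) -> float:
    """Storage plus provisioned-throughput cost for one file system, in USD."""
    storage = size_gb * STORAGE_RATE
    throughput = THROUGHPUT_MBPS * THROUGHPUT_RATE
    return round(storage + throughput, 2)


monthly_by_tenant = {
    name: file_system_monthly_cost(size) for name, size in FILE_SYSTEM_SIZES_GB.items()
}
gateway_monthly = round(GATEWAY_HOURLY_RATE * HOURS_PER_MONTH, 2)
total_monthly = round(sum(monthly_by_tenant.values()) + gateway_monthly, 2)
total_60_months = round(total_monthly * 60, 2)

for name, cost in monthly_by_tenant.items():
    print(f"{name}: ${cost:,.2f}/month")
print(f"Gateway: ${gateway_monthly:,.2f}/month")
print(f"Total:   ${total_monthly:,.2f}/month, ${total_60_months:,.2f} over 60 months")
```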

Running FSx File Gateway in my existing VMware environment will not generate any additional hardware or storage expense. The move to AWS created a surplus of capacity in my VMware environment that I’ll use for the appliance virtual machines.

The FSx File Gateway based solution will save 36% when compared to the cost of an on-premises NAS environment. Given that these costs were based on the maximum storage needed over 5 years, I’ll see further savings by growing the file system over time.
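The savings figure follows from the two totals given earlier: $150,000 of hardware plus $45,000 of support for the on-premises option, against $124,363 for the FSx File Gateway option over the same 60 months. A short Python sketch of that comparison, with illustrative variable names:

```python
nas_hardware_usd = 150_000
nas_support_usd = 45_000
fsx_total_usd = 124_363      # gateway, storage, and throughput over 60 months

nas_total_usd = nas_hardware_usd + nas_support_usd
savings_usd = nas_total_usd - fsx_total_usd
savings_fraction = savings_usd / nas_total_usd

print(f"On-premises NAS: ${nas_total_usd:,}")
print(f"FSx solution:    ${fsx_total_usd:,}")
print(f"Savings:         ${savings_usd:,} ({savings_fraction:.1%})")
```

The on-premises total excludes backup and disaster recovery costs, so this comparison is conservative on the NAS side.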

There is also an option to purchase a physical AWS Storage Gateway Hardware Appliance to use in place of the VM gateway appliance. While the hardware appliance comes at a cost, it is a good fit for environments that don’t have the virtual infrastructure capacity to host the FSx File Gateway virtual machine. There are two models from which to choose, one with 5 TB of cache, and one with 12 TB of cache. If I based my system on the hardware appliances, I would still save 30% or 27%, depending on the size of the appliance I deploy.

Business value

Beyond performance and cost, it’s important to consider the strategic advantages this solution brings to my business. For my use case, the value is in the data being stored, and not in my ability to manage a storage system. My customers are not better served because I spent a maintenance window replacing a failed piece of hardware. In fact, I would argue it’s the opposite. By leveraging Amazon FSx for Windows File Server to store my data, and an FSx File Gateway to deliver high-performance data access, I’m eliminating NAS administration work, allowing me to focus on other, higher value endeavors.

It’s also important to understand how a file system architecture fits into a bigger IT strategy.

This is where debates between the value of cloud and data center architectures are hashed out. For my business and our needs, the agility and scalability that come with building in the cloud exceed anything I could build in my own data center. It’s also worth considering that moving to an FSx for Windows File Server file system allows me to seamlessly integrate with other tools I’m already using, like AWS Backup.

If, like me, you have adopted a cloud-first strategy for your business, I’m sure you’ll find many more ways in which Amazon FSx for Windows File Server and FSx File Gateway will benefit and support your larger business goals.

Conclusion

In this blog post, I reviewed my process and results for testing the FSx File Gateway for use in my business. I discussed the baseline requirements for a NAS platform and showed how the FSx File Gateway delivers a 10x throughput boost over directly accessing FSx for Windows File Server file systems for my on-premises end-users. I also walked you through my financial review, which showed that an architecture built on Amazon FSx for Windows File Server and FSx File Gateway delivers a lower TCO than a traditional data center NAS environment. Lastly, I discussed the strategic advantages that come with implementing this architecture.

I hope you found this blog post helpful, and you’ll be able to use some of my evaluation process to validate how well the FSx for Windows File Server and FSx File Gateway can work for your NAS workloads. Thanks for reading this post. Please feel free to leave a comment or question in the comments section.
